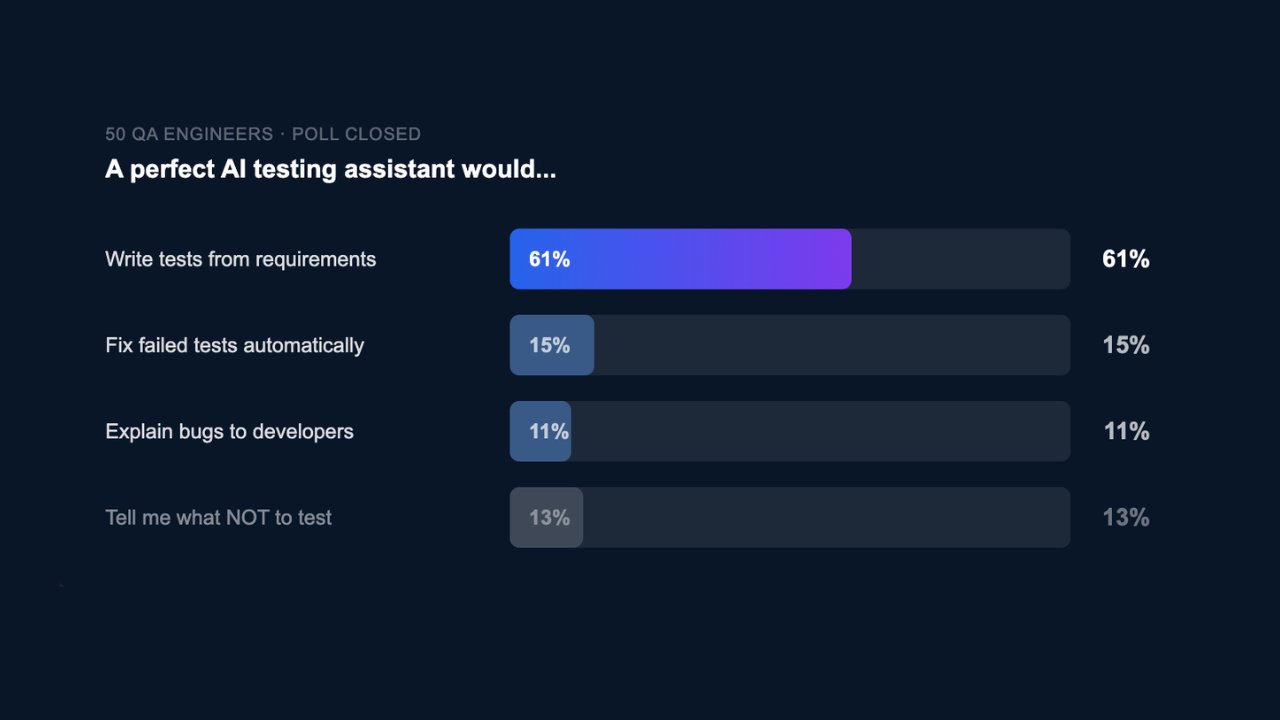

Last week, we ran a simple poll with one question: what should a perfect AI testing assistant do?

We gave four options. Fifty engineers responded. And 61% of them chose the same one.

Generate tests directly from requirements.

Not fixing broken tests.

Not suggesting what to skip.

Not explaining bugs.

Write the tests from the requirement before a single line of code is touched.

This highlights a familiar pain point: the blank page problem. Every QA engineer who has opened a Jira ticket and had to manually convert it into structured test cases knows how time-consuming, repetitive, and low-value this step can be.

What the other 39% revealed

The remaining responses complete the picture:

- 15% want failed tests fixed automatically. UI changes constantly break tests. Someone has to track down the issue, understand it, and rewrite the test, which is where automation often falls short.

- 13% want clarity on what not to test. Poor coverage decisions are costly: too much testing slows teams down, too little lets bugs slip through.

- 11% want bugs explained clearly to developers. A failing test is useless if it cannot be reproduced or understood.

These are not separate problems. They are four stages of the same workflow: write, run, maintain, communicate. Most teams struggle across all of them.

Three out of four already solved

Text2Test is built to address this entire workflow.

It connects to your product, design files, codebase, and tickets via MCP. It reads real, living requirements and generates structured test cases automatically, including happy paths, edge cases, and validation flows, in under five minutes.
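To make "structured test cases" concrete, here is a minimal sketch of what expanding a single requirement into happy-path, edge-case, and validation tests could look like. It is an illustration only, not Text2Test's actual API or output format: the `FieldRule` and `GeneratedCase` types, the `derive_test_cases` function, and the "quantity between 1 and 10" requirement are all hypothetical names and assumptions introduced for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FieldRule:
    """Hypothetical requirement fragment: a numeric input with inclusive bounds."""
    name: str
    minimum: int
    maximum: int


@dataclass
class GeneratedCase:
    """Hypothetical structured test case derived from a requirement."""
    title: str
    kind: str  # "happy_path", "edge_case", or "validation"
    input_value: int
    expect_accepted: bool


def derive_test_cases(rule: FieldRule) -> List[GeneratedCase]:
    """Expand one bounded-field rule into happy-path, edge, and validation cases."""
    typical = (rule.minimum + rule.maximum) // 2
    return [
        GeneratedCase(f"{rule.name} accepts a typical value", "happy_path", typical, True),
        GeneratedCase(f"{rule.name} accepts the minimum", "edge_case", rule.minimum, True),
        GeneratedCase(f"{rule.name} accepts the maximum", "edge_case", rule.maximum, True),
        GeneratedCase(f"{rule.name} rejects below the minimum", "validation", rule.minimum - 1, False),
        GeneratedCase(f"{rule.name} rejects above the maximum", "validation", rule.maximum + 1, False),
    ]


# Example requirement (assumed): "Quantity must be between 1 and 10."
for case in derive_test_cases(FieldRule(name="quantity", minimum=1, maximum=10)):
    print(f"{case.kind:<11} | {case.title} | input={case.input_value}")
```

Even this toy rule yields five cases from one sentence of requirement, which is the kind of repetitive expansion the blank page problem describes.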

Four pain points. One platform.